
[Deep Learning 101] Deep Learning Architecture and Training Principles (Part 2): Machine Learning Concepts and Deep Learning Models

Introduction

In the previous article, we explored the history of deep learning, which began with the emergence of artificial neural networks, as well as key events that drove its technological advancement. Through this, we were able to gain a deeper understanding of how deep learning has evolved.

Today, we will take a closer look at machine learning, the broader field that contains deep learning, and at deep learning models themselves.

What is “Machine Learning,” the Broader Field That Includes Deep Learning?

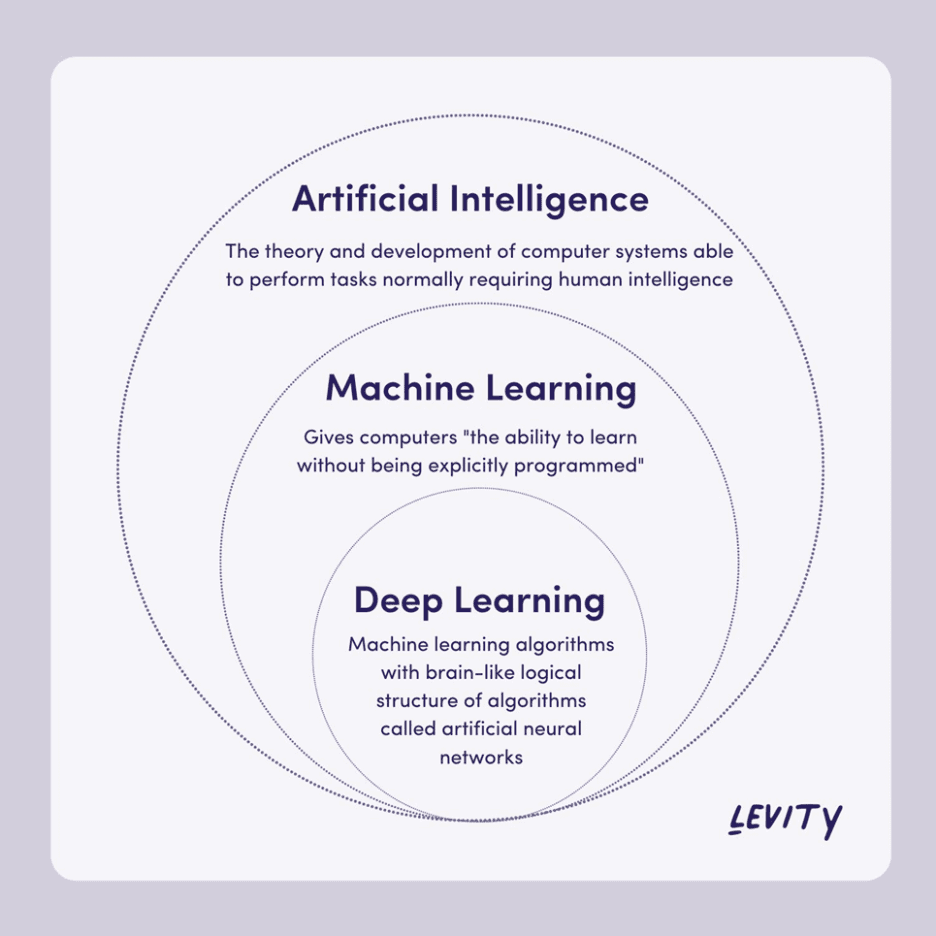

Both machine learning and deep learning are artificial intelligence (AI) technologies that learn based on data, and deep learning is categorized as a subset of machine learning.

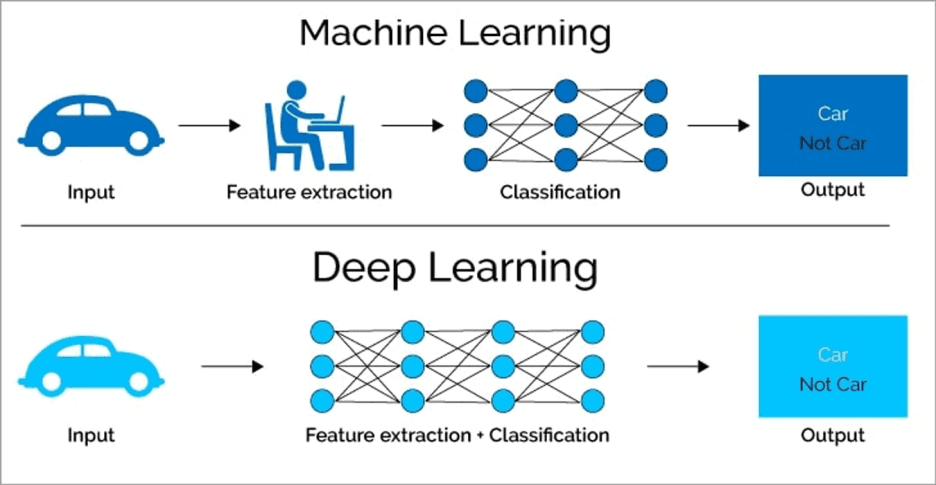

In traditional machine learning, humans usually have to define features manually. For example, to build a model that distinguishes between cats and dogs, humans must first determine which features are important, such as “ear shape” or “fur length.”

In contrast, deep learning learns useful features on its own from large amounts of data. The model identifies important patterns in the data without requiring humans to define each feature individually.

Traditional machine learning can achieve good performance with relatively small amounts of data and computational resources, and its models are often easier to interpret. However, for complex problems, its performance depends heavily on how carefully humans design the features.

Deep learning, on the other hand, can learn complex patterns from large-scale data without requiring manual feature design. As a result, it has demonstrated outstanding performance in various fields such as image recognition, speech recognition, and natural language processing. However, it requires vast amounts of data and computational resources, so training is often time-consuming and costly. In addition, the trained model behaves like a black box, which makes its decisions difficult to interpret.

What is a “Deep Learning Model”? – Understanding Its Structure and Training Method

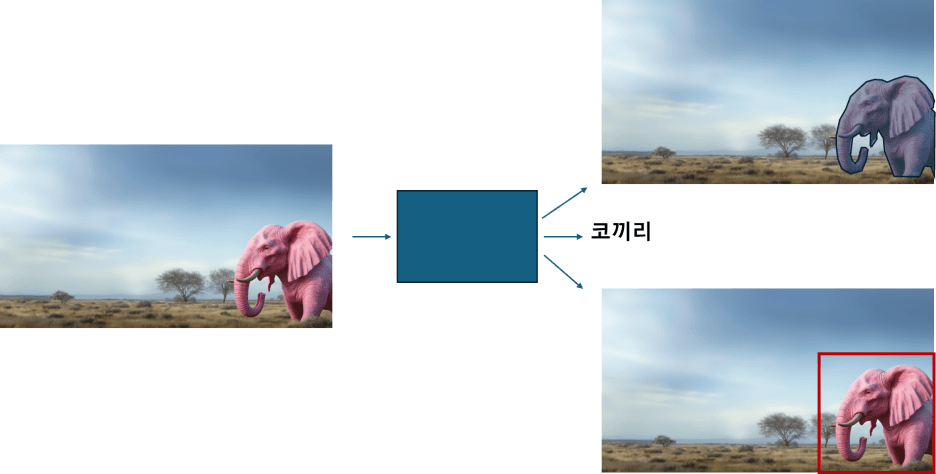

In deep learning, a model can be viewed as a function that takes an input and generates an output. This function may be complex, but fundamentally, it transforms a given input according to certain rules to produce the desired output.
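To make the "model as a function" view concrete, here is a minimal sketch in Python. It assumes the simplest possible model, a single linear unit, and the names `linear_model`, `weights`, and `bias` are hypothetical choices made only for illustration. Real deep learning models stack many such operations with nonlinearities in between.

```python
def linear_model(x, weights, bias):
    """Map an input vector to one output value: y = w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


# Hypothetical parameter values, chosen only for illustration.
weights = [0.5, -1.0]
bias = 2.0

prediction = linear_model([4.0, 1.0], weights, bias)
print(prediction)  # 0.5 * 4.0 + (-1.0) * 1.0 + 2.0 = 3.0
```

The rule that turns the input into the output is fixed by the values in `weights` and `bias`. Changing those values changes the function.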

Then what does it mean for a model to “learn”? It refers to the adjustment of numerous numerical values inside the model, known as parameters, through the training process. As training progresses, these values more accurately reflect the relationship between inputs and outputs, enabling the model to make more accurate predictions for new inputs.
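The following sketch shows one common way parameters are adjusted: gradient descent on a mean squared error. It assumes a one-input linear model and a tiny made-up dataset generated from y = 2x + 1. The function name `train_linear`, the learning rate, and the number of epochs are all illustrative assumptions rather than recommended settings.

```python
def train_linear(data, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0  # parameters start from arbitrary values
    n = len(data)
    for _ in range(epochs):
        grad_w = 0.0
        grad_b = 0.0
        for x, y in data:
            error = (w * x + b) - y        # prediction minus target
            grad_w += 2 * error * x / n    # d(MSE)/dw
            grad_b += 2 * error / n        # d(MSE)/db
        # Move each parameter a small step against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy data generated from y = 2x + 1 (made up for this example).
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
w, b = train_linear(data)
print(round(w, 3), round(b, 3))
```

After training, `w` and `b` end up close to 2 and 1, so the model can predict outputs for inputs it has not seen, such as x = 10.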

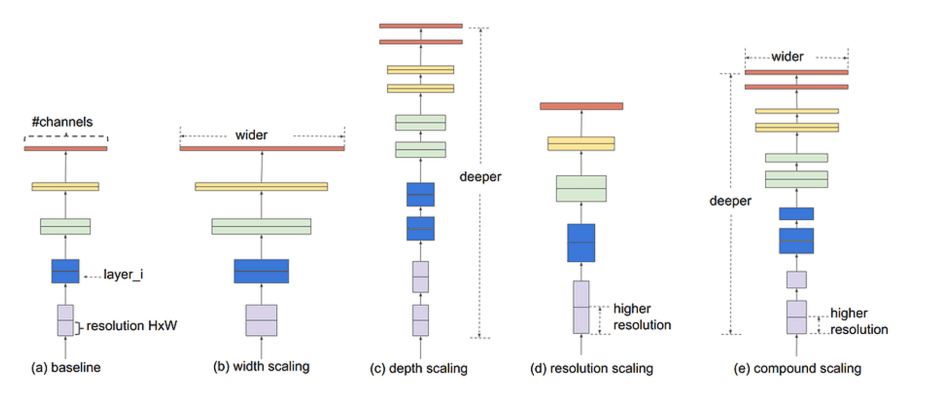

The width and depth of a model are key factors that determine its complexity. Width refers to the number of nodes in each layer, while depth refers to the number of layers. As these values increase, the model can process more information and learn more complex patterns. However, if the model is excessively large relative to the difficulty of the problem, it may overfit and perform worse on new data, or training may become inefficient. Therefore, the model size should be adjusted according to the complexity of the problem and the characteristics of the data.
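As a rough illustration of width and depth, the sketch below lists the shape of each layer in a fully connected network. It assumes that every hidden layer has the same width and that depth counts hidden layers only. The helper name `mlp_layer_shapes` is hypothetical.

```python
def mlp_layer_shapes(input_dim, width, depth, output_dim):
    """Return (inputs, outputs) for each layer of a fully connected network.

    Assumption: depth counts hidden layers, and every hidden layer
    has the same number of nodes (width).
    """
    sizes = [input_dim] + [width] * depth + [output_dim]
    return list(zip(sizes[:-1], sizes[1:]))


print(mlp_layer_shapes(4, 8, 2, 1))        # [(4, 8), (8, 8), (8, 1)]
print(len(mlp_layer_shapes(4, 64, 6, 1)))  # 7 layers: 6 hidden + 1 output
```

Increasing `width` makes each layer larger, while increasing `depth` adds more layers between input and output.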

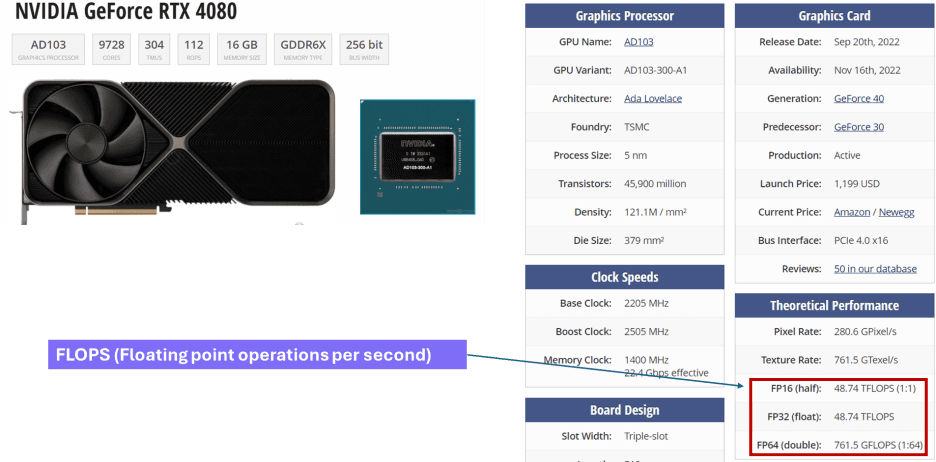

Representative metrics for a model's size and computational cost include the number of parameters, FLOPs, and latency.

First, the number of parameters refers to the total number of adjustable values within the model, such as weights and biases. Generally, a larger number of parameters allows the model to store more information and learn more complex patterns. However, if there is insufficient data or if the number is excessively large relative to the problem complexity, it may lead to overfitting.
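For a fully connected network, the parameter count can be worked out by hand. The sketch below assumes each layer has one weight per input-output pair plus one bias per output. The function name `count_parameters` and the layer sizes are illustrative.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of nodes per layer, input first.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += n_in * n_out  # one weight per input-output connection
        total += n_out         # one bias per output node
    return total


small = count_parameters([4, 8, 8, 1])
large = count_parameters([4, 64, 64, 1])
print(small)  # 121
print(large)  # 4545
```

Making the hidden layers 8 times wider increases the parameter count by far more than 8 times, because the weights between two hidden layers grow with the square of the width.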

Next, FLOPs (floating-point operations) is the total number of floating-point computations the model performs to process an input, and it is directly related to computational cost. It should not be confused with FLOPS (floating-point operations per second), which measures hardware throughput rather than model size. A higher FLOPs count means higher computational cost, and larger counts often go together with models that can capture more complex patterns in greater detail.

Finally, latency refers to the time taken from input to output. It depends on the hardware and software stack as well as on the model itself, and it is a critical metric in systems where real-time response is important.
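The number of floating-point operations in one forward pass can also be estimated by hand for a fully connected network. The sketch below assumes a common counting convention: each output node needs n_in multiplications and n_in additions (including the bias), and activation functions are ignored. Other conventions exist, so treat the numbers as an estimate. The name `forward_flops` is hypothetical.

```python
def forward_flops(layer_sizes):
    """Estimate floating-point operations for one forward pass.

    Assumption: each output node costs n_in multiplies plus n_in adds
    (bias included); activation functions are not counted.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += 2 * n_in * n_out
    return total


print(forward_flops([4, 8, 8, 1]))    # 208
print(forward_flops([4, 64, 64, 1]))  # 8832
```

This count describes the work the model requires per input. How long that work takes depends on how many operations per second the hardware can execute.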
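Latency is measured rather than calculated. The sketch below shows one simple way to do it with Python's `time.perf_counter`, averaging over several runs after a short warm-up. The function `toy_model` is a stand-in for a real model, and the warm-up and run counts are arbitrary assumptions.

```python
import time


def measure_latency(predict, example, warmup=10, runs=100):
    """Return the average time in seconds for one call to predict."""
    for _ in range(warmup):
        predict(example)  # warm-up calls are not timed
    start = time.perf_counter()
    for _ in range(runs):
        predict(example)
    elapsed = time.perf_counter() - start
    return elapsed / runs


def toy_model(x):
    """Stand-in for a real model: a small amount of arithmetic."""
    return sum(v * v for v in x)


latency = measure_latency(toy_model, list(range(1000)))
print(f"average latency: {latency * 1e6:.1f} microseconds")
```

The result varies from machine to machine and from run to run, which is why latency is usually reported together with the hardware it was measured on.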

Understanding Deep Learning Models by Type

As deep learning has advanced, various types of models have emerged. Let’s briefly look at the characteristics and differences of representative deep learning models: CNN, RNN, and Transformer.

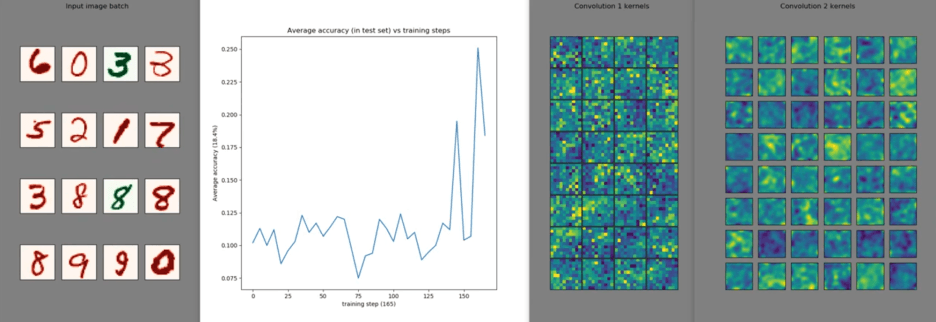

First, CNN (Convolutional Neural Network) is a neural network architecture specialized for image processing and is effective at extracting local features from input images. It learns while preserving spatial structure through convolution operations and operates based on the inductive bias that “spatially close information is highly related.” As a result, it can achieve strong performance even with relatively small datasets and is widely used for complex image and pattern recognition tasks.
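The convolution operation itself is small enough to write out directly. The sketch below slides a kernel over a 2D image with no padding and a stride of 1, which are simplifying assumptions. As in most deep learning libraries, the kernel is not flipped, so strictly speaking this is cross-correlation. The kernel values are hand-picked for illustration, whereas a CNN learns them during training.

```python
def conv2d(image, kernel):
    """Slide kernel over image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            # Each output value depends only on a small local window.
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# A tiny image whose right half is bright.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
kernel = [[-1, 1]]  # responds where brightness increases left to right

edges = conv2d(image, kernel)
print(edges)  # [[0.0, 1.0, 0.0], [0.0, 1.0, 0.0], [0.0, 1.0, 0.0]]
```

The output is nonzero only at the boundary between the dark and bright regions, and the same two kernel values are reused at every position. This weight sharing over local windows is what the inductive bias described above refers to.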

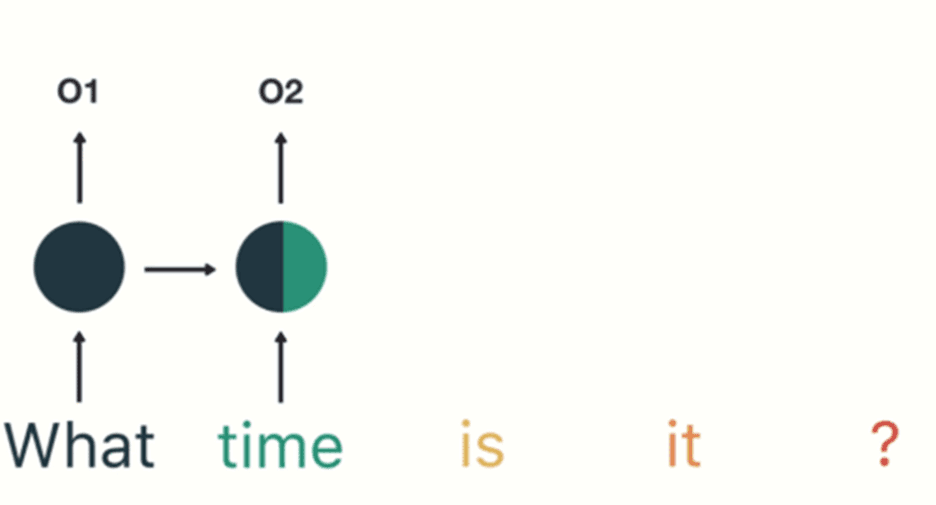

Next, RNN (Recurrent Neural Network) is suitable for processing sequential data (e.g., sentences, time series), as it reflects the order of input data and uses past information to predict the next output. However, as the sequence length increases, it struggles to capture long-term dependencies, largely because of the vanishing gradient problem. It also processes a sequence one element at a time, which limits parallel computation. Due to these limitations, Transformers are increasingly replacing RNNs.
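The recurrence at the heart of an RNN can be sketched in a few lines. This example assumes a scalar hidden state and a tanh activation to keep the arithmetic readable. The parameter names `w_x`, `w_h`, and `b` and their values are hypothetical, and real RNNs use vectors and weight matrices instead.

```python
import math


def rnn_forward(inputs, w_x, w_h, b, h0=0.0):
    """Run a scalar RNN over a sequence and return every hidden state."""
    h = h0
    states = []
    for x in inputs:
        # The new state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# Same values in a different order lead to different final states.
states_a = rnn_forward([1.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)
states_b = rnn_forward([0.0, 1.0], w_x=1.0, w_h=0.5, b=0.0)
print(states_a[-1], states_b[-1])
```

Because each state is computed from the previous one, the steps cannot run in parallel, and the influence of early inputs passes through many repeated transformations before it reaches the end of a long sequence.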

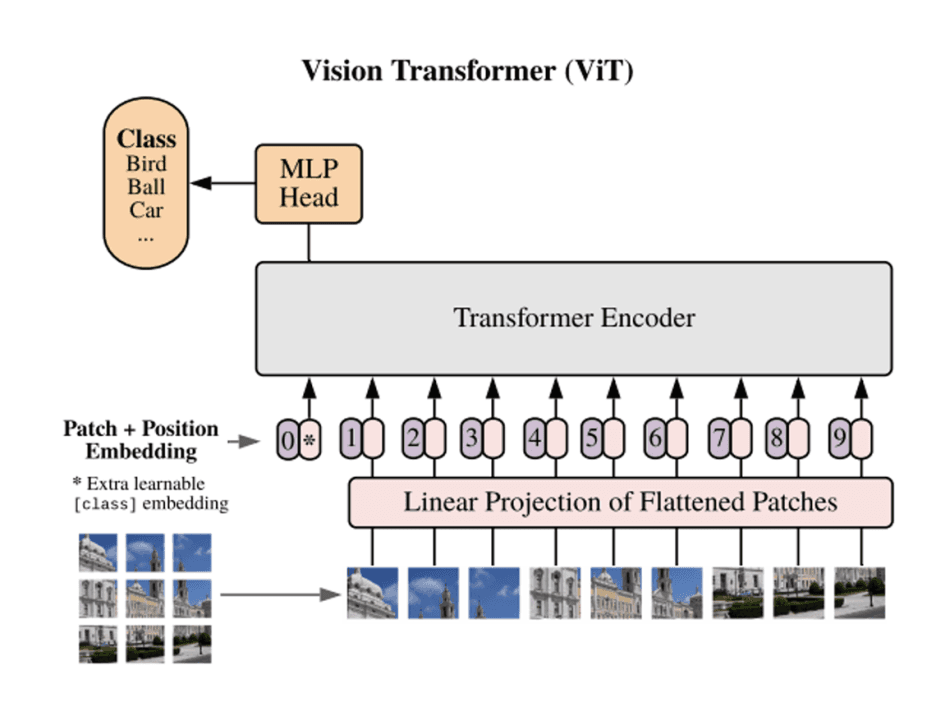

Finally, the Transformer divides input data into small units called tokens (for images, these are fixed-size patches, as in the Vision Transformer) and uses self-attention to learn the relationships among all of these units. Because every unit can be processed at the same time rather than one after another, it is well-suited for parallel processing and has a scale-up-friendly architecture where performance improves with increased data and computation. However, it requires substantial computational resources for both training and inference.
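In a Transformer, the relationships between input units are computed with self-attention. The sketch below is a heavily simplified version: it assumes a single attention head and uses each input vector directly as query, key, and value, whereas a real Transformer first applies learned projection matrices. The function names and the example vectors are illustrative.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention without learned projections."""
    d = len(tokens[0])
    outputs = []
    for query in tokens:
        # Score this unit against every unit, including itself.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all input vectors.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, tokens))
            for i in range(d)
        ]
        outputs.append(mixed)
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print([round(v, 3) for v in row])
```

Each output row depends on all inputs but not on the other output rows, so the rows can be computed in parallel. The number of scores grows with the square of the number of units, which is one reason the computational cost is high.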

Conclusion

In this article, we explored the concepts of machine learning and deep learning, as well as various types of deep learning models. Deep learning models interpret and process data in different ways, producing increasingly refined results through training. We hope this article has helped broaden your understanding of deep learning.

In the next article, we will take a closer look at the training principles of deep learning models based on these concepts.